Learning is Augmentation

We should invest more in AI's potential to transform learning

Every few months AI amazes me. Last year one of those moments inspired me to drop everything else I was doing and immerse myself in the technology.

At the time I was testing whether I could integrate AI more deeply into my work. I started by using it to learn about the technical underpinnings driving the latest improvements in large language models. I received a week-long crash course unlike anything I had experienced in my 25 years of school. I fed in a syllabus from a Stanford deep learning course and walked through it section by section, asking Claude to explain each concept in detail. I peppered it with questions big and small, pushing on each detail until I understood it. The model walked me through code to implement the concepts so I could test out the ideas in practice. It even helped me build simple web apps to try out different image classifiers and text generators.

By the end of the week I felt like I was witnessing the most important thing happening in the world right now. I had learned in a week what usually would have taken me months of work.

That brush with AI still colors my perspective today. The tech has improved drastically since then. Now, a growing share of my work is performed hand-in-hand with agents. But to get the most out of AI as a tool for improving human well-being, development of the technology should lean into the aspects that made that first experience magical.

AI is a transformational tool for learning. On any topic, it is a tireless, patient, articulate Einstein on demand, answering almost any question with remarkable accuracy. It can share knowledge via text, voice, podcast, image, and, soon enough, video.

With growing discussion about potential labor displacement, AI’s capabilities for instruction are one of the greatest reasons for optimism. I often get asked by business and policy people how to make AI better for workers. Recent proposals suggest reorienting the development of AI away from automative applications that replace human labor and toward augmentative ones that increase demand for human skills. Creating AI systems that complement rather than substitute for human labor could deliver productivity gains while reducing the social disruption caused by job loss.

Learning is perhaps the most important form of human augmentation. AI provides an opportunity for advancing learning in ways we have not seen for at least 100 years. Any proposal for developing human-centered AI should assign a prominent role to its capabilities for instruction.

A brief history of learning

The invention of the printing press around 1440 led to profound shifts in learning. Before 1450, a book was a luxury item costing roughly the same as a small farm, with professional scribes capable of copying only a few pages a day. The printing press drastically reduced the cost of reproducing texts, enabling broader dissemination of knowledge and making it possible to learn without a master instructor.

But access to books alone did not shape the modern economy. As discussed in The Race Between Education and Technology by Goldin and Katz, another major transformation occurred over the first half of the 20th century: the spread of mass secondary education.

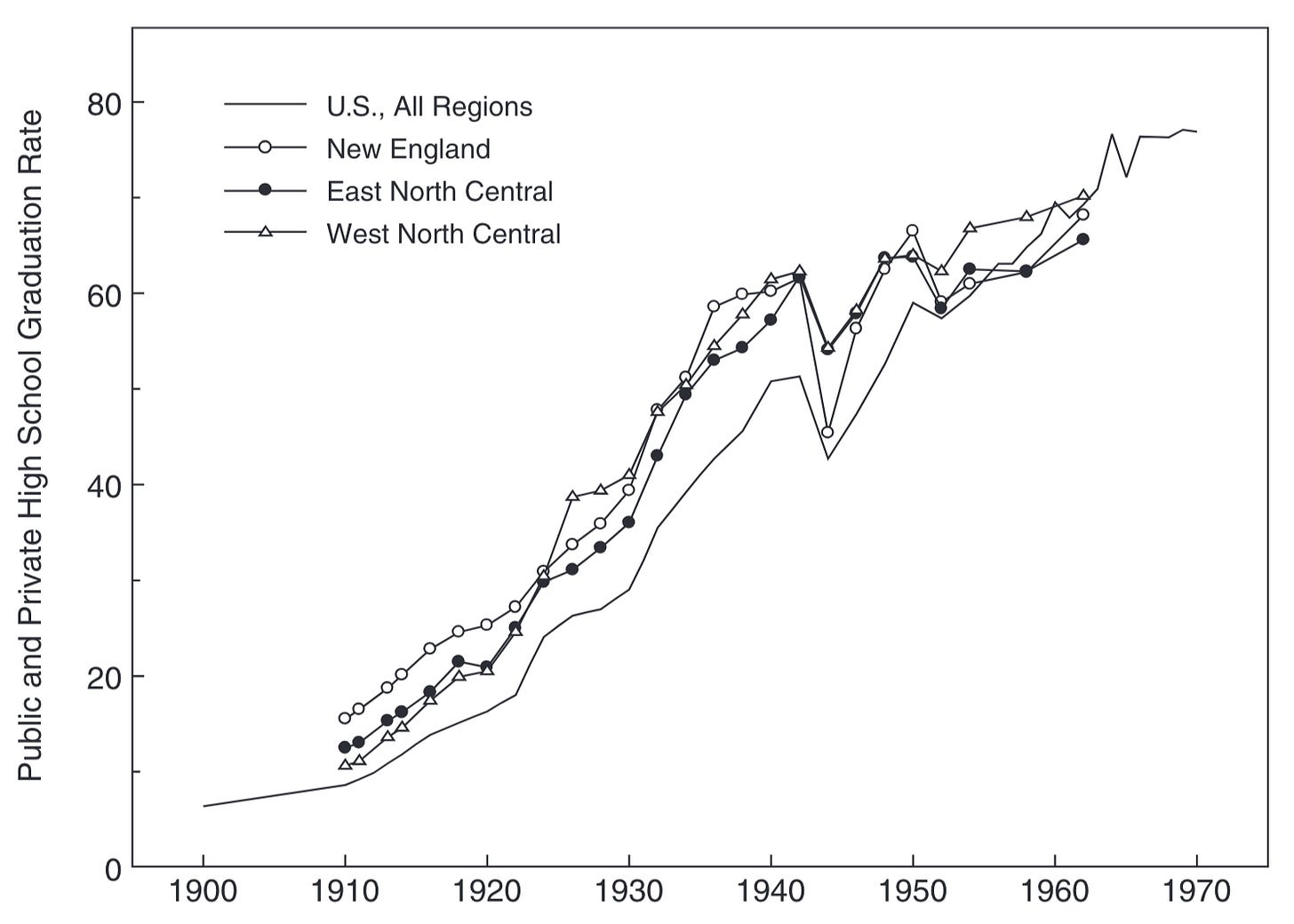

At the turn of the 20th century, the US was ahead of its peers at providing a basic education for most of its population, with the literacy rate for white native-born Americans above 95%. However, education typically stopped at age 13 or 14. Secondary school was an elite institution. In 1910, fewer than 10% of American youths finished secondary school. A high school diploma was a marker of wealth or extreme intellectual promise.

The next three decades saw an explosion in secondary education. By 1940, the high school enrollment rate for 14- to 17-year-olds rose to 73%, more than four times the rate only a generation earlier. These massive shifts simultaneously led to rapid productivity growth and reduced inequality over the ensuing decades, fueling US economic dominance. Today, no country can afford not to educate its citizens beyond elementary school. Virtually every country has secondary school completion rates above the US’s mark in 1900.

These were profound changes to learning that happened in very recent history. The US and many other countries transformed into educated societies over the course of around one generation. This helped drive rising real incomes over the 20th century, with human capital today accounting for around two-thirds of global wealth.

AI has the potential to at least match this impact on the way people learn.

Learning is Augmentation

A key insight from the historical record is that the expansion of education led to broad-based increases in real wages. This contrasts with the effect of recent technologies such as industrial robots, which Acemoglu and Restrepo suggest led to declining wages even as they raised productivity.

Why does learning lead to higher wages? Because it augments human capabilities.

Augmentative technologies increase the scope of tasks performed by workers, while automative technologies shrink workers’ tasks by replacing them. Technologies that improve learning unambiguously increase the set of tasks a worker can perform, which is why the expansion of secondary school led to robust increases in both productivity and wages.

Learning is a textbook example of human augmentation.

AI as a tool for learning

AI represents a change in learning that can be at least on par with the expansion of secondary education in the 20th century.

Over the past few centuries, the dominant paradigm for a person to increase their human capital has been to spend more time in school. Average years of schooling rose from around 8 in 1900 to about 14 today. As technology shifted in ways that favored more skilled workers, people responded by pursuing more education.

There has been less success in improving the technology of learning, or the rate at which people can reach proficiency. Indeed, in some dimensions things might be getting worse.

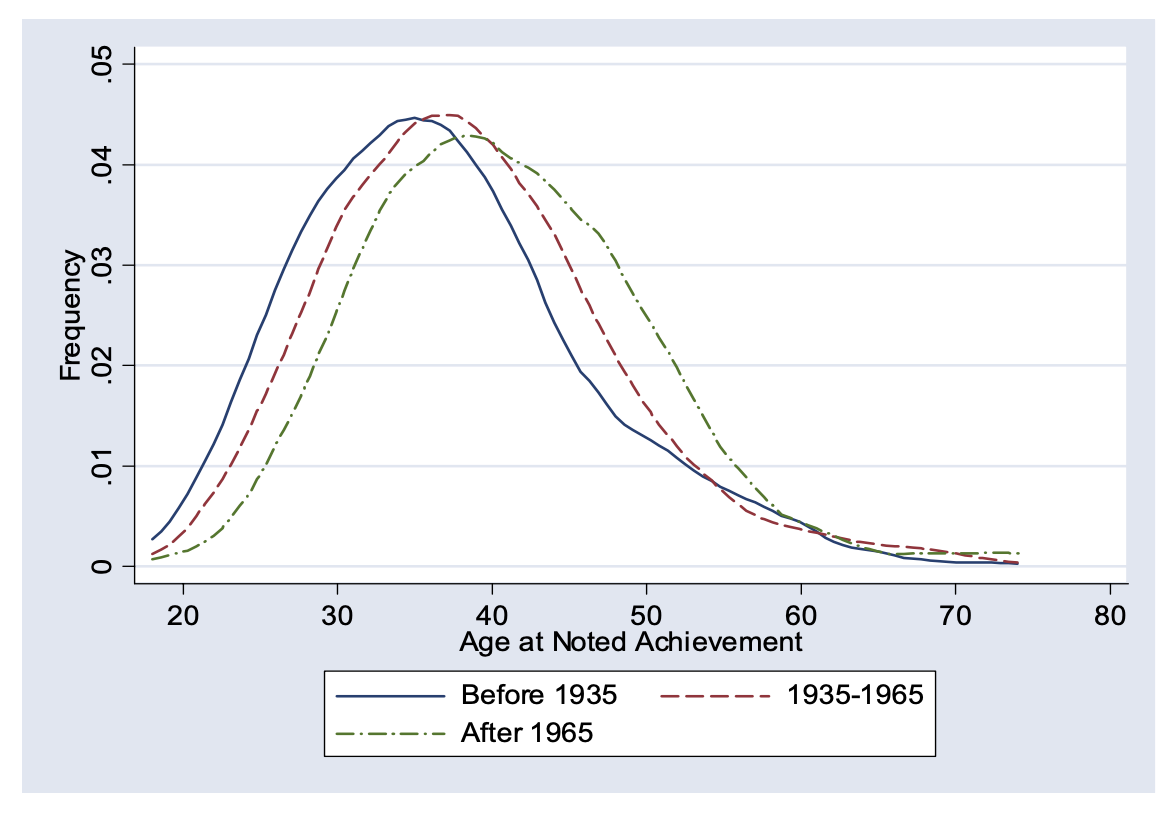

Work by Ben Jones suggests the age at which great innovators reach their first major breakthroughs has been rising over time, with the mean age of great achievement rising from roughly 34 to 40 over the 20th century. Jones explains this via a rising “burden of knowledge,” with people needing to spend more time in school to get up to speed with the frontiers of science. In turn this leads to greater specialization, with innovators needing to keep a narrow focus to reach the forefront of any field. The growing burden of knowledge is a prime suspect for the slowdown in productivity growth over the past few decades.

What if AI can help people learn better and faster?

There are good reasons to think this may be possible, if it’s not already happening. AI encodes a vast share of the stock of human knowledge and surfaces it on demand with clear explanations.

Recall my experience using the technology to learn about a new topic faster than I ever have before. If knowledge is easier to find and absorb, it might reduce the burden required to reach proficiency, with huge societal impacts.

Maybe it won’t be necessary to stay in school for dozens of years to reach the frontier of a field. Perhaps it won’t take 18 years to learn to the level of a high school graduate. Maybe we can preserve incentives to cultivate critical thinking by making it easier to develop those skills in the first place.

Imagine how much more time would be available, either to work, dive deeper into a topic, or increase the breadth of knowledge across different fields. Consider how much easier it might be to switch careers in response to life changes or to try something new.

It could be the biggest improvement in the technology of learning since the printing press.

AI for augmentation

There is growing concern that AI might lead to perverse effects for workers if it leads to mass job displacement. To combat this, prominent thinkers have argued for pushing AI in a more augmentative instead of automative direction. Rather than developing technology that replaces work, the goal is to change minds and pass policy so that frontier companies build AI that makes workers more rather than less valuable.

While this is a worthy goal, it is challenging to figure out how to operationalize it. Is it really possible to direct AI so that it becomes more capable at assisting workers without simultaneously developing the capacity to replace them?

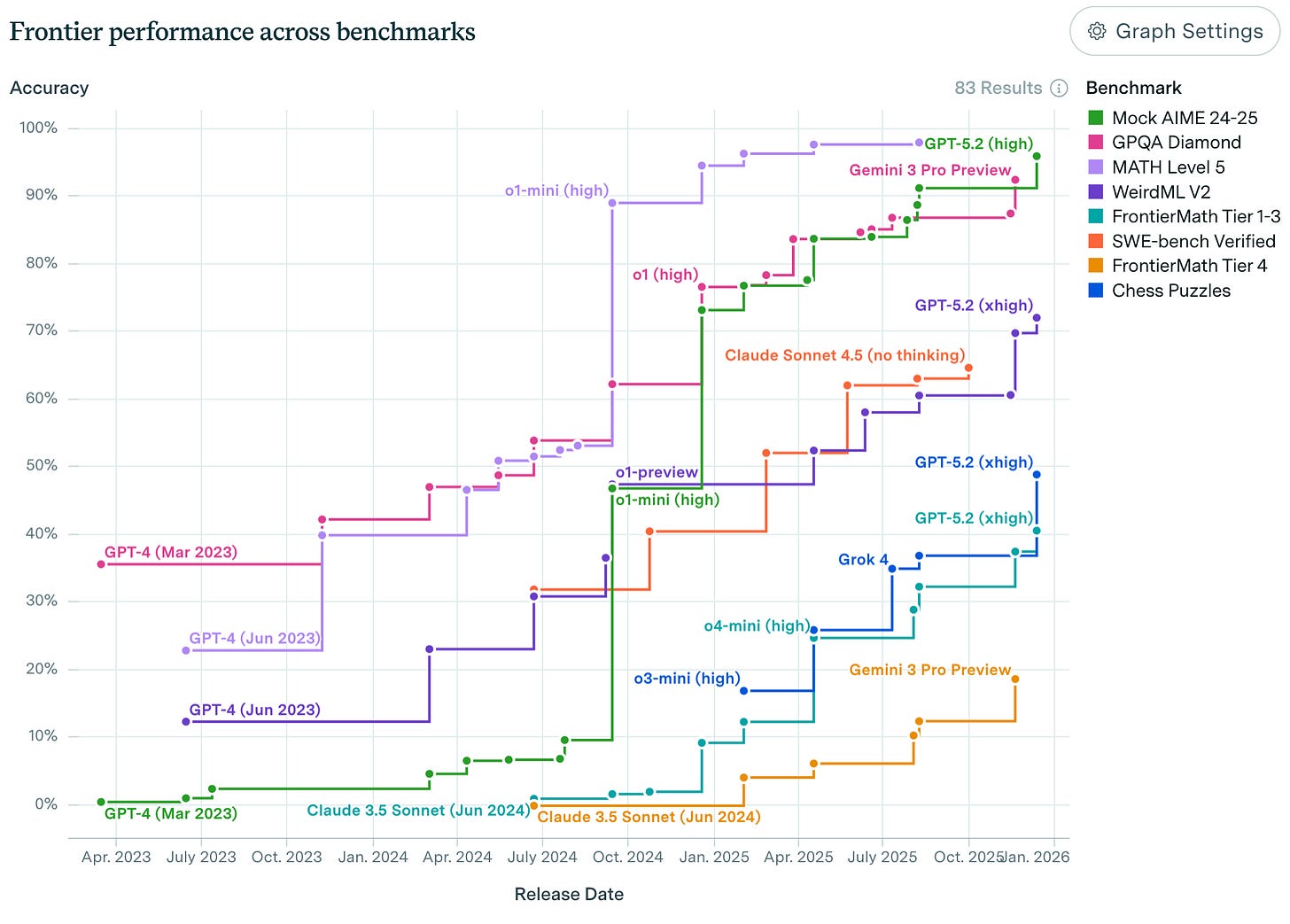

This is an open question, but recent research raises some concerns. New models outclass previous models essentially across the board on evaluations. In other words, over the past few years, improvements in a model’s ability to solve one set of thorny problems have coincided with the model getting better at a wide range of other tasks, too. Today's models are better at almost everything than the models of six months ago.

As a result, it could be hard to advance augmentative forms of AI without pushing forward automative capabilities at the same time. Today the concern isn’t about whether to build robotic arms narrowly focused on replacing line workers in a manufacturing plant. The question is whether it’s possible to create an intelligence that is general, but not so general that it replaces work.

Balancing the tax system, increasing worker voice, and changing standard model evaluations are worthy goals to pursue to try to shape the impacts for labor, but they are not guaranteed to succeed.

Investing in AI’s capabilities as a pathbreaking learning tool should be at the forefront of the discussion.

Investing in learning is a robustly good strategy

If it really is possible to use AI to improve the technology of learning, this represents a good outcome in almost any situation that arises in the coming years.

It sets a baseline for productivity improvements even in the most pessimistic scenarios for AI adoption or implementation challenges. More human capital means more output and higher wages.

In the case of automation-driven job displacement, better retraining could help workers transition to alternative forms of work faster than has been possible in the past, easing the pain of technological disruption. Unlike the Industrial Revolution, which took 50 to 70 years to produce broad-based wage growth, retraining aided by AI could help people adjust faster by moving away from jobs with declining opportunities and toward jobs with rising demand.

And if the machines one day nonetheless take a huge share of jobs, leaving people with lots of idle time? Even then, vastly expanded human knowledge is good in and of itself, and newfound leisure would broaden people's agency to pursue choices hardly imaginable today.

Developing AI to improve learning will not resolve all the myriad questions that will arise in the coming years, but it will be a useful step forward no matter what challenges emerge.

What will it look like?

Unlocking AI’s full potential as a tool for learning will require a broad spectrum of technical and institutional innovations. It will require disruption to the way learning has been organized for at least the past 100 years.

Certainly this is true at the K-12 level, where faster learning disrupts the age-based classroom model prevailing in the US and Europe since the 19th century. Personalized learning requires updates to these institutions, which track students along common trajectories to benefit from economies of scale in instruction. In the current model one teacher shares the same idea with a classroom full of students simultaneously, with efforts to ensure similar baseline levels of aptitude. This became the most efficient mode of educating a large populace, with age, and to an extent honors and advanced coursework, used as proxies for common learning capabilities.

For much, if not most, of K-12 instruction, AI removes economies of scale as a rationale for this model. Students no longer need to be organized along age-based trajectories simply because it’s easier for society to distribute knowledge this way. There may still be reasons for age-based tracking for social development, though personalization also opens the door to alternative models of cross-age interaction. For most of human history strict age-based social tracking was uncommon, showing that institutions do not have to be wedded to the way they have become used to doing things.

Similar principles hold at the university level. As with K-12 instruction, universities have to grapple with what their comparative advantage is in educating students relative to personalized AI. Some aspects of college stand out: discussion and debate of big ideas with peers; exposure to a cutting-edge research environment; leadership and social training via extracurriculars; and establishment of a lifelong professional network. Can universities lean into the areas where they provide unique value, and leave other present functions like examinations or lectures on foundational topics to AI systems?

AI for lifelong learning

Perhaps the most novel effect AI will have on learning is on people already in the workforce. One-third of the US workforce, or about 60 million people, switch jobs each year. Add in people who switch roles within their firm, and the result is an enormous amount of annual churn in a labor market that accounts for two-thirds of the value of the economy.

Today this high level of churn occurs despite numerous counteracting forces, one of the most important of which is the burden of knowledge. “Renaissance men” no longer exist as they once did, because the training required to enter a new field has risen. These challenges interact with institutional factors like occupational licensing, which put many jobs off limits unless strict requirements are met.

These institutions may need to adapt to a world where AI reduces the burden of knowledge across many professions. It may no longer take years of instruction for an engineer to reach proficiency as a lawyer, or a nurse in one specialty to switch to another. Standardization of occupational quality may still serve a role in ensuring consumers can trust the level of service they receive, but AI might induce rethinking about what those standards should be.

We should be excited about a world where it becomes drastically easier to switch careers and try something new as life circumstances change or labor market signals suggest a better path. AI opens avenues for workforce retraining very different from the clunky systems of today. We may unlock greater productivity and well-being as trajectories look less like career ladders and more like career lattices.

In the near term, it is possible that frontier AI companies will not have the incentive or bandwidth to develop these sorts of workforce learning systems as they focus on the AGI North Star. Instead they may develop more tailored programs such as certifications for proficiency with AI tools.

This creates the opportunity for new firms and organizations operating in concert with government to build AI-based applications for certified retraining programs. This would be a step towards developing the sort of augmentative AI models that work for workers.

It is still the earliest days of imagining what these futures might look like. There is time to shape the AI we see and push its boundaries as a transformational tool for learning.

Overcoming pitfalls and pushing the frontier

This may seem like a rose-tinted view of AI’s impact on education. People fear a range of negative outcomes, from cheating on assignments to lost critical thinking skills to worse mental health. These are serious concerns, and they may not go away soon.

These potential pitfalls lend all the more urgency to investing in technological and institutional changes to learning. The mission should be to minimize harms while pushing the boundaries of what education can look like.

The last mass mobilization to upend learning happened only 100 years ago, when a secondary school education changed from a privilege for the elite to a near-universal promise of a better life. In the process living standards improved for billions of people globally. Advances in AI present a similar opportunity. The duty today is to strive for a world where the constraint on knowledge is the limit of our biology rather than the shortcomings of our technology for learning.